Category: Magic Windows and Mixed up Realities

Magic Windows: Final

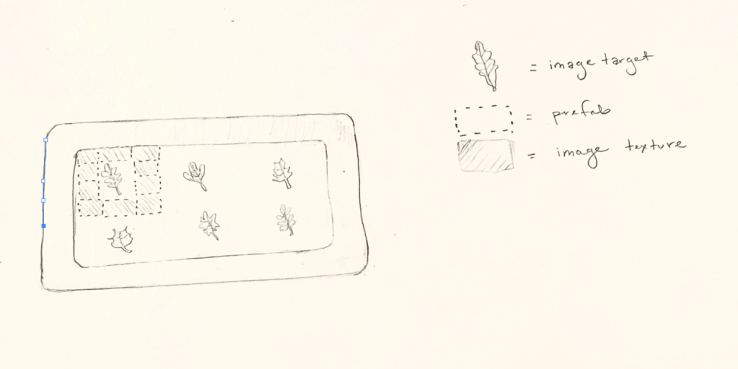

Little sleepy plant friends ❤ For this project / sketch I kept playing with AR Foundation's "place multiple objects" example, thinking of our class conversation on animism and some of the examples shown in the class lecture on augmenting objects. It would be better as an object / image tracking build.

Ideal Functionality:

When you tap a plant a dialogue bubble pops up and its facial emotion changes.

I would also like to try instantiating the same object with different textures, similar to what Hayk showed us in class last week for Augmenting the urban space. That way there could be a linear dialogue to click through, or a toggle from asleep to awake? https://forum.unity.com/threads/how-to-instantiate-the-same-object-with-different-textures.269030/ I would also like to play with transparency layers: https://forum.unity.com/threads/make-object-cube-transparent.495314/
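A rough, engine-agnostic sketch of the tap behaviour described above: each tap steps a plant to its next state, and each state pairs a face texture with a dialogue bubble. With two states it acts as an asleep / awake toggle; with more it becomes a linear dialogue that wraps around. It is written in Python only to show the logic. The class name, texture names, and the "good morning!" text are placeholders I made up, not anything from the Unity project.

```python
from dataclasses import dataclass, field


@dataclass
class PlantFriend:
    """Engine-agnostic model of one tappable plant (all names are hypothetical)."""

    # Each state pairs a face texture name with the bubble text shown for it.
    states: list = field(default_factory=lambda: [
        ("sleeping_eyes", "zzz"),
        ("awake_eyes", "good morning!"),  # placeholder dialogue line
    ])
    index: int = 0

    @property
    def face(self) -> str:
        return self.states[self.index][0]

    @property
    def bubble(self) -> str:
        return self.states[self.index][1]

    def on_tap(self) -> None:
        """Advance to the next state, wrapping back to the first after the last."""
        self.index = (self.index + 1) % len(self.states)


plant = PlantFriend()
print(plant.face, plant.bubble)  # starts in the first (asleep) state
plant.on_tap()
print(plant.face, plant.bubble)  # second (awake) state after one tap
```

In the actual build, `on_tap` would presumably be called from whatever raycast / touch handler detects a tap on the plant, and `face` would pick which texture goes on the instantiated object.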

Current Functionality:

Right now it's more like a sticker: you tap the screen a little below the pot to have the prefabs appear (a zzz dialogue box and a set of sleeping eyes). Since the angle is based on where your phone is at the time the AR session launches, the stickers won't always be at the right perspective when used. Would it be better to have it centered around object recognition? I have had a couple of issues with using images as materials: they seem to be getting reversed. I think I also left on the UI example that helps guide the user through movement for better plane detection.

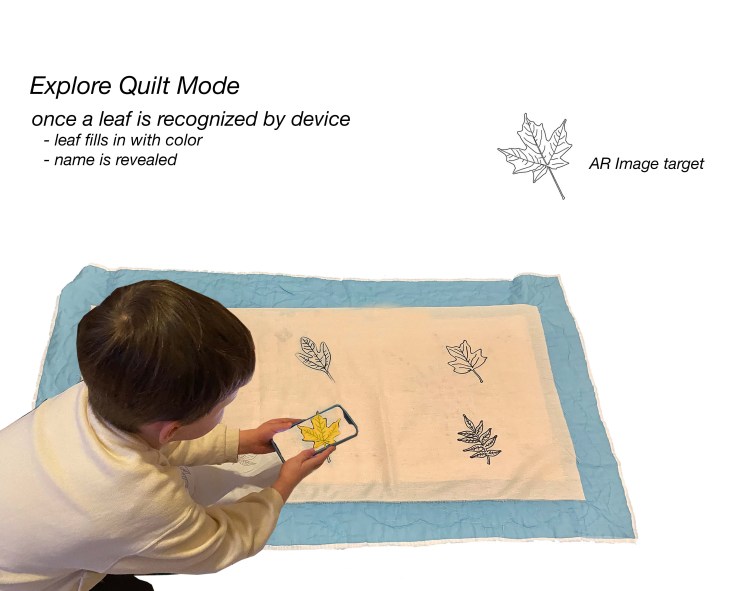

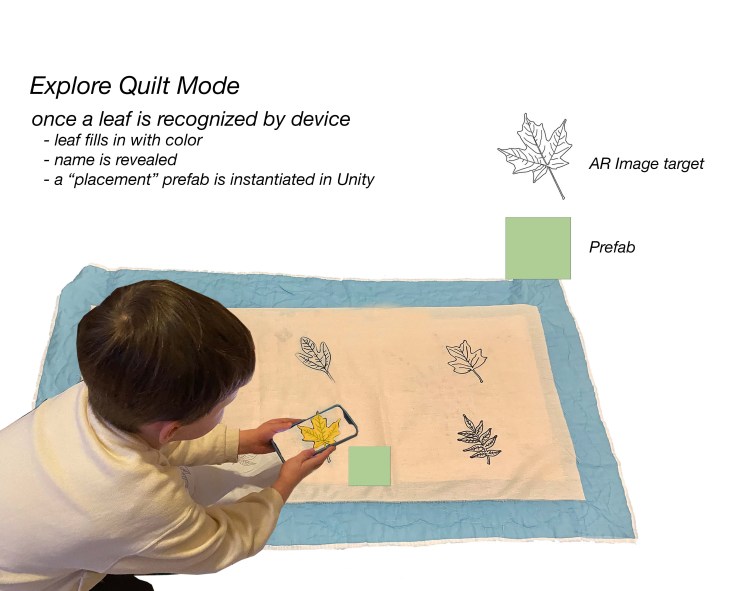

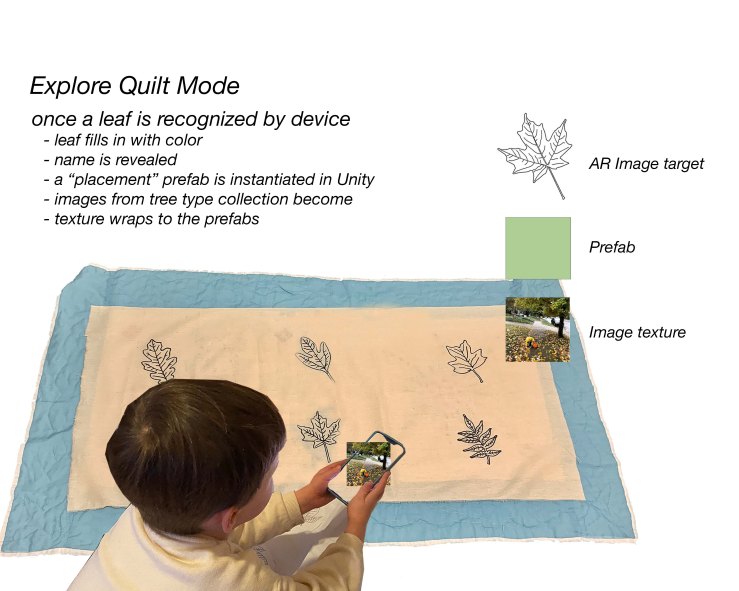

Misc Quilt Tests

Also played around some with making images & stitching appear on the quilt as a simple test. This also uses the AR Foundation place multiple objects tutorial. Would like to try it with image targets (either the AR Foundation example or starting with Vuforia for tests). For some reason the textures were wrapping upside down? Need to take a closer look and make sure I didn't get lost in my XYZ system.
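One thing to check for the upside-down textures (a guess, not something these tests confirmed) is whether the image and the mesh agree on where the vertical texture coordinate starts. Some tools put v = 0 at the top of the image and others at the bottom. The sketch below, in Python just to show the arithmetic, mirrors a quad's UV coordinates vertically (v -> 1 - v) or horizontally (u -> 1 - u). The function names and the sample quad are illustrative assumptions.

```python
def flip_v(uvs):
    """Return the UV pairs with the vertical coordinate mirrored (v -> 1 - v)."""
    return [(u, 1.0 - v) for (u, v) in uvs]


def flip_u(uvs):
    """Return the UV pairs with the horizontal coordinate mirrored (u -> 1 - u)."""
    return [(1.0 - u, v) for (u, v) in uvs]


# Corners of a unit quad: bottom-left, bottom-right, top-right, top-left.
quad_uvs = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (0.0, 1.0)]

print(flip_v(quad_uvs))  # upside-down fix: top and bottom rows swap
print(flip_u(quad_uvs))  # mirrored fix: left and right columns swap
```

If applying one of these flips makes the image look right, the culprit is more likely a texture-coordinate convention than the XYZ placement of the object.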

Magic Windows: Augmenting the urban space

Test w/ cones

Original hello world test – plane detection & point cloud

For this week we were to explore using AR Foundation and augment urban space. I was able to get the class hello world example to my phone with Rui’s help and a couple other tests but wasn’t quite able to get at my initial ideas. ❤ Maybe after office hours tomorrow : )

Things I looked into:

- Multiple image trackers, reference libraries and mutable reference libraries

Wanted to augment the sonic space with subway train logo markers. The idea: when it recognizes the signage, it starts playing various songs written about the NYC trains, like Tom Waits’ Downtown Train or Beastie Boys’ Stop That Train.

Was able to get it to the stage where it recognized my images in the reference library. The next step would be to have it trigger audio once an image is tracked.
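A minimal sketch of that next step, written in Python only to lay out the logic: look up a song by the name of the recognized reference image, and start it once rather than on every tracking update. The image names, the class, and its method names are hypothetical. In AR Foundation this would presumably hang off the tracked image manager's change events, with the lookup keyed on each tracked image's reference image name.

```python
# Hypothetical reference-image names mapped to the songs mentioned above.
SONGS_BY_IMAGE = {
    "downtown_train_sign": "Tom Waits - Downtown Train",
    "stop_that_train_sign": "Beastie Boys - Stop That Train",
}


class SubwayJukebox:
    """Decides which song to start when a reference image is tracked."""

    def __init__(self, songs_by_image):
        self.songs_by_image = songs_by_image
        self.now_playing = None

    def on_image_tracked(self, image_name):
        """Return the song to start, or None if nothing new should play."""
        song = self.songs_by_image.get(image_name)
        if song is None or song == self.now_playing:
            return None  # unknown image, or this song is already playing
        self.now_playing = song
        return song

    def on_image_lost(self, image_name):
        """Clear the current song if its image is no longer tracked."""
        if self.songs_by_image.get(image_name) == self.now_playing:
            self.now_playing = None


jukebox = SubwayJukebox(SONGS_BY_IMAGE)
print(jukebox.on_image_tracked("downtown_train_sign"))  # song to start
print(jukebox.on_image_tracked("downtown_train_sign"))  # None: already playing
```

Keeping track of `now_playing` matters because tracking updates arrive continuously, so without it the same clip would restart on every update.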

3.4.20 – Understanding ARfoundation Image Tracking

After working through some examples / tutorials last night, I decided to sift back through the AR Tracked Image Manager documentation to see about the following:

- multiple targets via an XRReferenceImageLibrary

- encountered issues when sifting through the ARfoundation example via GitHub ❤ mostly worked though! Was having trouble showing the last 3 I added

- dynamic + modifiable / mutable libraries at runtime

- how to dynamically change the image library live via the iOS camera or camera roll (most likely through a MutableRuntimeReferenceImageLibrary)

Helpful References:

- Experiments in AR ITP class documentation

- Unity ARFoundation documentation – Trackables -> Image Tracking -> tracked Image Manager

- The wonderful Dilmer – Unity3d AR Foundation Playlist (Thank you Maya & Lydia!)

Looking through the ARfoundation Trackables / ImageTracking Documentation:

- AR Tracked Image Manager

  - The tracked image manager will create GameObjects for each detected image in the environment. Before an image can be detected, the manager must be instructed to look for a set of reference images compiled into a reference image library. Only images in this library will be detected.

- Reference Library

  - XRReferenceImageLibrary

  - RuntimeReferenceImageLibrary

    - A RuntimeReferenceImageLibrary is the runtime representation of an XRReferenceImageLibrary.

    - You can create a RuntimeReferenceImageLibrary from an XRReferenceImageLibrary with the ARTrackedImageManager.CreateRuntimeLibrary method.

SwiftUI: Image picker to texture add

Unity -> Xcode -> iOS build Troubleshooting

https://circuitstream.com/blog/setup-arkit/

The ARKit plugin was deprecated in 2019 / look into AR Foundation

–> this tutorial might not be as helpful now.

Look into these two playlists:

Exploring AR/SDK examples

This weekend I hope to explore a range of AR / SDK examples

1. Saving and Loading World Data / Apple Developer

This example can be downloaded and opened in Xcode to build to an iOS device. Requires Xcode 10.0, iOS 12.0, and an iOS device with an A9 or later processor.

ARKit developer references

This weekend I will be exploring a range of SDK examples. Began a spreadsheet to keep track of the process. Will bullet out the info here from ARKit's Apple Developer page:

Helpful links:

- ARWorldMap / importance of saving & loading world data

- Saving and Loading World Data.

- Understanding World Tracking

Essentials

- choosing which camera feed to augment

- verifying device support & user Permission

- managing session lifecycle and tracking quality

- class ARSession

- class ARConfiguration

- class ARAnchor

Camera ( Get details about a user’s iOS device, like its position and orientation in 3D space, and the camera’s video data and exposure. )

QuickLook ( The easiest way to add an AR experience to your app or website.)

- Previewing a Model with AR Quick Look

- class ARQuickLookPreviewItem

- Adding Visual Effects in AR Quicklook

Display ( Create a full-featured AR experience using a view that handles the rendering for you)

class ARView: A view that enables you to display an AR experience with RealityKit.

class ARSCNView: A view that enables you to display an AR experience with SceneKit.

class ARSKView: A view that enables you to display an AR experience with SpriteKit.

WorldTracking (Augment the environment surrounding the user, by tracking surfaces, images, objects, people, and faces.)

Discover supporting concepts, features, and best practices for building great AR experiences.

A configuration that monitors the iOS device’s position and orientation while enabling you to augment the environment that’s in front of the user.

A 2D surface that ARKit detects in the physical environment.

- Tracking and Visualizing Planes

Detect surfaces in the physical environment and visualize their shape and location in 3D space.

class ARCoachingOverlayView: A view that presents visual instructions that guide the user.

- Placing Objects and Handling 3D Interaction

Place virtual content on real-world surfaces, and enable the user to interact with virtual content by using gestures.

class ARWorldMap: The space-mapping state and set of anchors from a world-tracking AR session.

- Saving and Loading World Data

Serialize a world tracking session to resume it later on.

- Ray-Casting and Hit-Testing

Find 3D positions on real-world surfaces given a screen point.

Magic Windows: The Poetics of Augmented Space

Lev Manovich, The Poetics of Augmented Space // http://manovich.net/content/04-projects/034-the-poetics-of-augmented-space/31_article_2002.pdf

“Going beyond the ‘surface as electronic screen paradigm’, architects now have the opportunity to think of the material architecture that most usually preoccupies them and the new immaterial architecture of information flows within the physical structure as a whole. In short, I suggest that the design of electronically augmented space can be approached as an architectural problem. In other words, architects along with artists can take the next logical step to consider the ‘invisible’ space of electronic data flows as substance rather than just as void – something that needs a structure, a politics, and a poetics.”